AI Detector Text Accuracy is a topic I care about because I’ve seen real people get blamed for “using AI” when they didn’t. I’ve also seen students panic after uploading their own writing and getting a scary score. And I’ve seen professionals waste time rewriting perfectly fine content just to make a detector happier. That’s frustrating, and in my opinion, it’s a big reason we need to talk about how these tools actually behave.

AI Detector Text Accuracy sounds like it should be simple: “Is this text AI or human?” But real language doesn’t work like a barcode. Human writing can be clear, repetitive, formal, or simple. AI writing can also be messy, personal, and full of mistakes. So when a detector gives a percentage, that number often feels more confident than it deserves.

In this guide, I’ll explain AI Detector Text Accuracy in plain language. I’ll show why results are often wrong, what causes false positives, and how I think you should use detector scores in a practical way. I’ll also add my opinion, ratings, bullet-point tips, and an FAQ. ✅

What AI Detector Text Accuracy Really Means 🎯

AI Detector Text Accuracy usually means: how often a detector labels text correctly. But in real life, a single accuracy number hides two different kinds of error:

- False positives: human writing labeled as AI ❌

- False negatives: AI writing labeled as human ❌

Most people only worry about false negatives. I worry more about false positives because that’s where unfair accusations happen.
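To make the two error types concrete, here’s a minimal Python sketch. It assumes you have tested a detector on texts whose authorship you already know. The class name, function name, and all the counts are made up for illustration; they don’t come from any real detector.

```python
from dataclasses import dataclass


@dataclass
class DetectorCounts:
    """Outcome counts from testing a detector on texts with known authorship."""
    human_flagged_ai: int  # false positives
    human_passed: int      # true negatives
    ai_flagged_ai: int     # true positives
    ai_passed: int         # false negatives


def error_rates(c: DetectorCounts) -> dict:
    """Return overall accuracy plus the two error rates separately."""
    human_total = c.human_flagged_ai + c.human_passed
    ai_total = c.ai_flagged_ai + c.ai_passed
    total = human_total + ai_total
    return {
        "accuracy": (c.human_passed + c.ai_flagged_ai) / total,
        "false_positive_rate": c.human_flagged_ai / human_total,
        "false_negative_rate": c.ai_passed / ai_total,
    }


# Hypothetical counts, invented for illustration only.
counts = DetectorCounts(human_flagged_ai=10, human_passed=90,
                        ai_flagged_ai=80, ai_passed=20)
rates = error_rates(counts)
print(rates)
```

With these invented numbers, the headline accuracy is 85%, which sounds decent. But the false positive rate is 10%, meaning 1 in 10 human writers in this test would be wrongly flagged. That’s the number I’d look at first.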

Also, AI Detector Text Accuracy depends on:

- language level (simple vs advanced writing)

- topic (technical vs casual)

- editing (human-edited AI text and AI-edited human text)

- length (short text is harder to judge)

- style (formal writing can look “AI-like”)

So a single score doesn’t tell the full story.

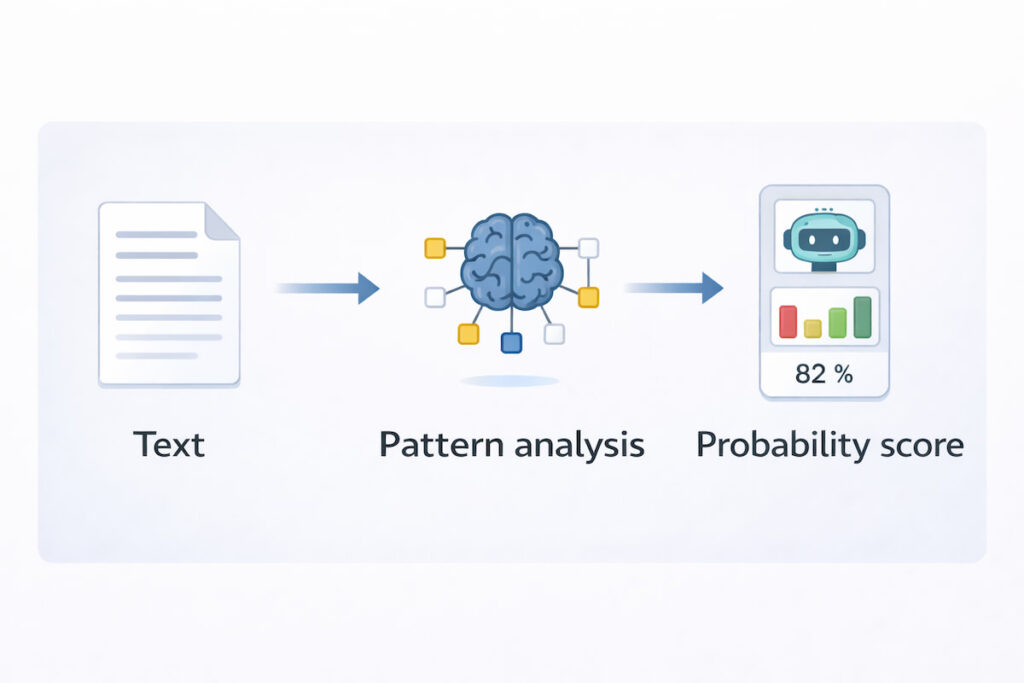

How AI Detectors Usually Work (Simple Version) 🧠

Most detectors look for patterns in text. They don’t “see” who wrote it. They estimate likelihood.

Common signals detectors use:

- predictability: very smooth, predictable sentences can look AI-like

- burstiness: humans often mix short and long sentences

- repetition: repeated patterns can look machine-generated

- rare word use: unusual word choices may change the score

- structure: overly perfect formatting can trigger suspicion

Here’s the important thing: humans can write predictably too. Especially students, non-native writers, and anyone following a formal template.

That’s why AI Detector Text Accuracy becomes tricky.
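To show what signals like burstiness and repetition can look like in practice, here’s a rough Python sketch. This is my own simplified stand-in, not how any particular detector works. The function names are hypothetical, the sentence splitter is naive, and real tools likely rely on much richer statistics than sentence length and first words.

```python
import re
from statistics import mean, pstdev


def split_sentences(text: str) -> list[str]:
    # Naive splitter: breaks after ., !, or ? when followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def burstiness(text: str) -> float:
    """Variation in sentence length: standard deviation divided by the mean."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return pstdev(lengths) / mean(lengths)


def repeated_opening_ratio(text: str) -> float:
    """Share of sentences that reuse an opening word already seen."""
    openers = [s.split()[0].lower() for s in split_sentences(text)]
    if not openers:
        return 0.0
    return 1 - len(set(openers)) / len(openers)


uniform = "The cat sat down. The dog sat down. The bird sat down."
varied = ("I waited. After an hour of pacing the hallway, "
          "the door finally opened. Nothing.")

print(burstiness(uniform), repeated_opening_ratio(uniform))
print(burstiness(varied), repeated_opening_ratio(varied))
```

The first sample is fully human-written and still scores zero on burstiness with heavy repetition. A system leaning on signals like these would find it “AI-like,” which is exactly the problem.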

AI Detector Text Accuracy Problem #1: Human Writing Can Look “Too Clean” 🧼

This is the biggest cause of wrong results in my experience.

If you write:

- short sentences

- clear grammar

- simple vocabulary

- direct structure

…some detectors think it’s AI, even if it’s fully human.

Why? Because detectors often treat “smoothness” as a warning sign. But smooth writing is also a sign of good editing. That means AI Detector Text Accuracy suffers when writing is clear.

My take: If a detector punishes good clarity, it’s not judging authorship. It’s judging style.

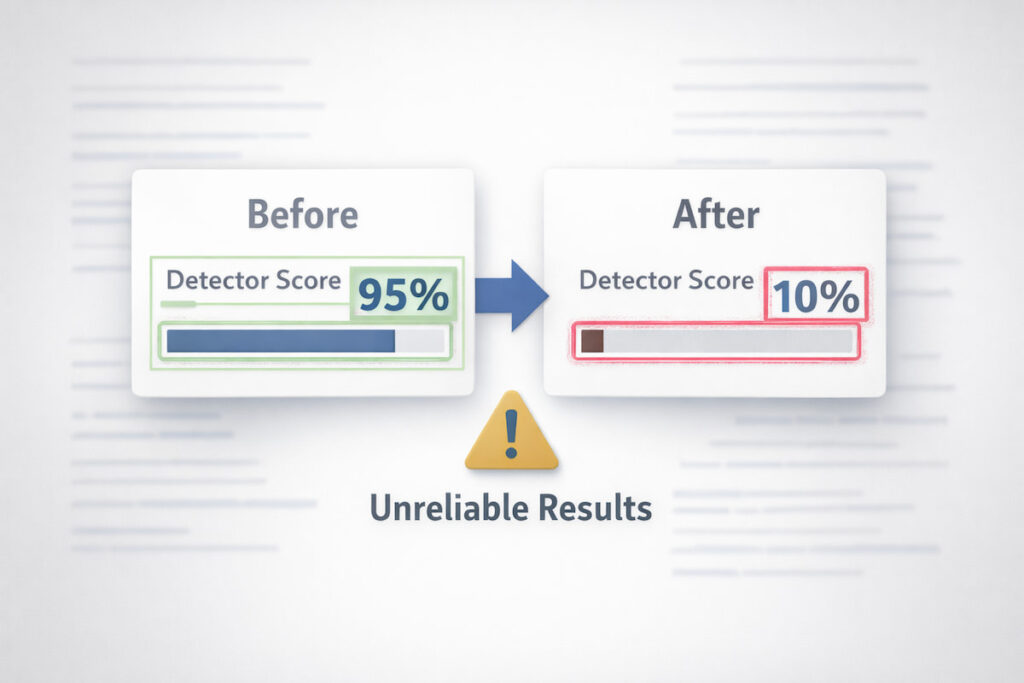

AI Detector Text Accuracy Problem #2: Editing Confuses Detectors ✍️

Editing breaks detectors fast. Let’s say you wrote a paragraph yourself, then you:

- fix grammar

- remove repetition

- improve flow

- add transitions

Now the text becomes more polished, and detectors may claim it’s AI.

Or the opposite:

- you paste AI text and rewrite it heavily

- you change sentence structure and word choice

- you add personal examples

Now the detector might say “human.”

So AI Detector Text Accuracy drops because real writing is not static. People edit. People rewrite. People revise. That’s normal.

AI Detector Text Accuracy Problem #3: Detectors Struggle With Short Text 🧩

Short samples are harder to judge. A single paragraph doesn’t contain enough style signals.

If you test:

- one tweet

- one short email

- one paragraph

- one intro

…you can get random-looking scores. That’s why AI Detector Text Accuracy improves slightly with longer text, but still isn’t reliable enough to treat as proof.

My rule: The shorter the text, the less I trust detector scores.

AI Detector Text Accuracy Problem #4: Non-Native English Gets Hit Hard 🌍

This part matters a lot. Many non-native writers use:

- simpler sentences

- repeated sentence patterns

- common vocabulary

Detectors may misread that as “AI-like.” So AI Detector Text Accuracy can be unfair to ESL writers.

I don’t like that. Not because I’m emotional about it, but because it’s a real bias in how pattern-based systems behave. Clear, simple English should not be treated as suspicious.

AI Detector Text Accuracy Problem #5: Detectors Are Always Behind AI 🔁

AI writing changes fast. Models change fast. Writing tools evolve. Detectors try to catch up.

That means:

- a detector trained on older AI patterns may fail on newer AI text

- newer AI may look more “human,” reducing detection signals

- human writing influenced by AI tools may look more “AI-like”

So AI Detector Text Accuracy is not stable. It shifts over time.

AI Detector Text Accuracy Problem #6: “Percentages” Feel Like Proof (But Aren’t) 📉

This is the psychological trap.

When a detector says:

- “92% AI-generated”

…people treat it like a lab test. In my opinion, that’s a mistake. It’s closer to a guess based on patterns.

A high score can still be wrong.

A low score can still be wrong.

A medium score is often meaningless.

So the real issue is not only AI Detector Text Accuracy. It’s how people interpret scores.
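Here’s a small Python sketch of why a flag is weaker evidence than it feels. It uses Bayes’ rule and three numbers I invented for illustration: how common AI text is in the pile being checked, how often the detector catches AI text, and how often it wrongly flags human text. None of these figures come from a real tool, and the score a detector displays is not necessarily a calibrated probability in the first place.

```python
def prob_ai_given_flag(prevalence: float,
                       true_positive_rate: float,
                       false_positive_rate: float) -> float:
    """Bayes' rule: probability a flagged text is really AI-written."""
    flagged_ai = prevalence * true_positive_rate
    flagged_human = (1 - prevalence) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical scenario: 10% of submissions are AI-written, the detector
# catches 90% of them, and it wrongly flags 5% of human-written texts.
p = prob_ai_given_flag(prevalence=0.10,
                       true_positive_rate=0.90,
                       false_positive_rate=0.05)
print(round(p, 3))
```

Under these assumptions, about two out of three flagged texts are actually AI-written, so roughly one in three flags lands on a human. Change the assumed numbers and the answer moves a lot, which is the point: the flag alone doesn’t settle anything.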

AI Detector Text Accuracy: Where These Tools Can Still Help ✅

I’m not saying detectors are useless. I’m saying they’re often misused.

Detectors can be useful for:

- flagging content for manual review

- helping editors spot overly generic writing

- comparing drafts (before vs after)

- finding sections that look robotic and repetitive

So AI Detector Text Accuracy can be helpful as a signal, not as a verdict.

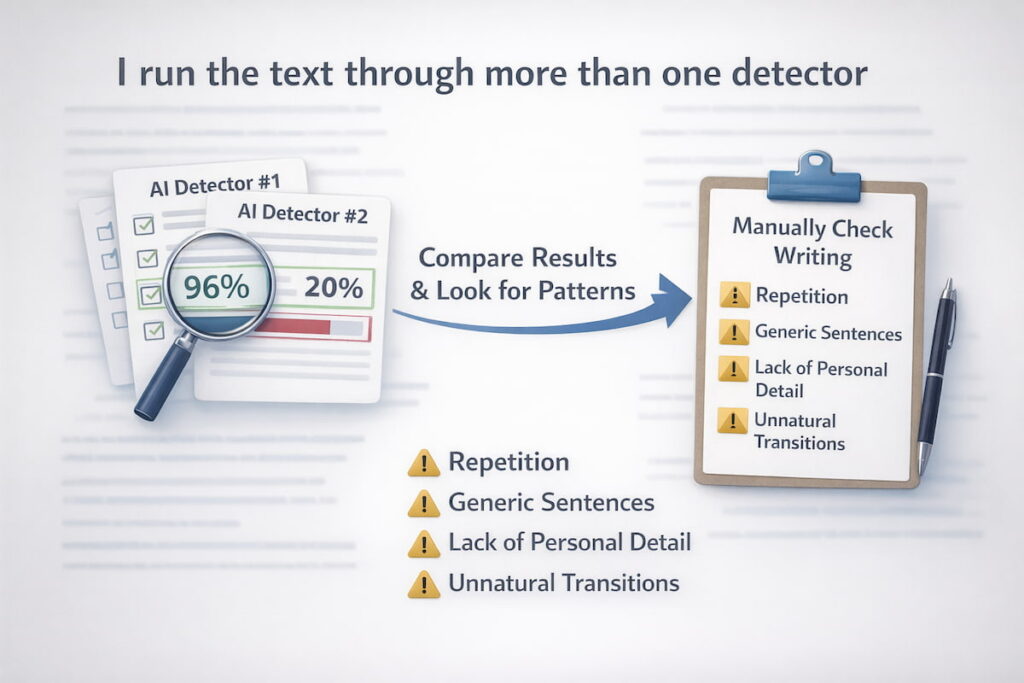

The Safer Way to Use Detectors (My Method) 🛡️

If I must use a detector, I follow a simple method:

- I run the text through more than one detector

- I compare results instead of trusting one score

- I look for patterns, not numbers

- I check the writing myself for:

  - repetition

  - generic sentences

  - lack of personal detail

  - unnatural transitions

If two tools disagree wildly, that tells me the score isn’t reliable. And it often happens.
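The comparison step can be sketched in a few lines of Python. This assumes you have already collected scores between 0 and 1 from each tool by hand. The detector names, the scores, and the 0.30 spread threshold are all placeholders I picked for the example, not recommended values.

```python
def compare_scores(scores: dict[str, float], max_spread: float = 0.30) -> str:
    """Summarize whether several detector scores agree with each other.

    scores maps a detector name to its score in [0, 1].
    max_spread is an arbitrary cutoff chosen for illustration.
    """
    if len(scores) < 2:
        return "need more than one detector"
    spread = max(scores.values()) - min(scores.values())
    if spread > max_spread:
        return "detectors disagree: treat scores as unreliable"
    return "detectors roughly agree: still review manually"


# Hypothetical scores for the same text from three made-up tools.
result = compare_scores({"detector_a": 0.92,
                         "detector_b": 0.15,
                         "detector_c": 0.40})
print(result)
```

Notice that neither outcome says “AI” or “human.” Even when the tools agree, the output only tells me whether the scores are worth looking at, and the manual check still happens.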

My Opinion: AI Detector Text Accuracy Is Overtrusted 🗣️

Here’s my honest opinion.

I think AI Detector Text Accuracy is often overtrusted because people want an easy answer. Schools, clients, and managers want a simple “yes/no” tool. But writing is messy, and authorship detection is not clean.

I also think detectors are worst when used to accuse someone. If someone’s reputation, grade, or job depends on a score, that feels irresponsible. In high-stakes situations, detectors should be only one small part of a bigger review process.

If you want my real advice: use detectors to improve writing quality, not to punish writers.

Ratings: AI Detector Text Accuracy (Types of Detectors) ⭐

Instead of rating brand names, I’m rating types of detector setups, because that’s more honest and stable. Scores out of 10.

1) Single detector, one scan ⭐

- Reliability: 4/10

- Why: one tool can be wrong easily

2) Multiple detectors + compare results ⭐

- Reliability: 6/10

- Why: disagreement shows uncertainty, not guilt

3) Detector + human review (best practice) ⭐

- Reliability: 7.5/10

- Why: humans can spot context and author style

4) Detector + writing history (drafts, timestamps) ⭐

- Reliability: 9/10

- Why: real process evidence beats pattern guessing

So yes, AI Detector Text Accuracy improves a lot when you include real proof like drafts and revision history.

Signs a Detector Result Is Likely Wrong 🚩

If you see these, be careful:

- the text is very short (under 200 words)

- the writer is a non-native English speaker

- the content uses a formal template (reports, letters)

- the text was heavily edited

- the detector gives extremely high confidence without explanation

- different detectors give opposite scores

These are big AI Detector Text Accuracy warning signals.
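If you review a lot of flagged text, the checklist above can be turned into a small Python helper. This is only a sketch: the function and parameter names are hypothetical, and every input is something a human reviewer fills in. The 200-word cutoff is the same one from the list.

```python
def warning_flags(word_count: int,
                  non_native_writer: bool,
                  formal_template: bool,
                  heavily_edited: bool,
                  unexplained_high_confidence: bool,
                  detectors_disagree: bool) -> list[str]:
    """Return the reasons to distrust a detector result for one text."""
    checks = [
        (word_count < 200, "text is very short (under 200 words)"),
        (non_native_writer, "writer is a non-native English speaker"),
        (formal_template, "content follows a formal template"),
        (heavily_edited, "text was heavily edited"),
        (unexplained_high_confidence, "very high confidence with no explanation"),
        (detectors_disagree, "different detectors give opposite scores"),
    ]
    return [reason for triggered, reason in checks if triggered]


# Hypothetical case: a 150-word email from an ESL writer, tools disagree.
flags = warning_flags(word_count=150,
                      non_native_writer=True,
                      formal_template=False,
                      heavily_edited=False,
                      unexplained_high_confidence=False,
                      detectors_disagree=True)
print(flags)
```

The more reasons come back, the less weight I’d give the score. An empty list doesn’t make the score trustworthy; it only means none of these specific red flags applied.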

How to Reduce False Positives (If You’re a Student or Writer) ✍️✅

If you’re worried about detectors, here are practical steps:

- Keep your drafts (Google Docs history helps)

- Add personal examples and specific details

- Avoid repetitive sentence openings

- Mix sentence lengths naturally

- Use your natural voice, not overly robotic phrases

- Don’t over-edit into “perfect corporate tone”

Also, keep a simple file of:

- outline

- rough draft

- final draft

That alone protects you more than any detector.

AI Detector Text Accuracy: Bullet Summary (Quick Takeaways) 📌

- AI Detector Text Accuracy is not “proof,” it’s a signal

- False positives are common, especially with clean writing

- Editing and short text confuse detectors

- Non-native English can be unfairly flagged

- Multiple tools + human review is safer

- Draft history is the strongest evidence

FAQ: AI Detector Text Accuracy ❓

1) What is AI Detector Text Accuracy?

AI Detector Text Accuracy is how well a tool can correctly identify whether text was likely written by AI or by a human. In real life, it’s limited because writing styles overlap and editing changes patterns.

2) Why does AI Detector Text Accuracy fail on human writing?

Because humans can write in ways that look “predictable,” especially in formal or simple writing. Also, strong editing can make human writing look more polished, which some detectors mistakenly label as AI.

3) Can AI Detector Text Accuracy be trusted for school or work?

I don’t think it should be used as final proof. It can be one small signal, but it should be combined with human review and evidence like drafts, notes, and writing history.

4) Why do different detectors give different results?

They use different pattern rules, training data, and thresholds. That’s why AI Detector Text Accuracy varies across tools, and why one score should not decide anything important.

5) How can I protect myself from false positives?

Save drafts and keep revision history. Add specific personal details. Avoid repetitive sentence patterns. If needed, show your outline and rough draft. Process evidence is stronger than any detector score.

6) Does rewriting AI text make detectors fail?

Yes, it often can. Heavy rewriting can make AI text look human. Also, heavy editing can make human text look AI. This is a major weakness in AI Detector Text Accuracy.